Getting really, really robust

Athey et al.'s Triply Robust Estimator

quandt_34

Applied economists love panel data because panel data let us watch the same units over time. States over years. Firms over quarters. Countries over decades. That gives us two margins of variation (across units and across time) and the hope that we can say something causal rather than merely decorative.

But the whole enterprise rises or falls on the counterfactual. We observe what happened after treatment. We do not observe what would have happened to that same unit in that same period absent treatment. Every estimator in this literature is, at bottom, a machine for filling in that missing cell.

And that is exactly where misspecification creeps in. If California changes a prison policy and Nevada does not, comparing California after the policy to Nevada after the policy can be a numerically elegant leap of faith. California and Nevada differ in all sorts of ways that matter for crime, wages, output, mortality, or whatever outcome we care about. The real problem is not “finding another state.” The real problem is estimating California-without-the-policy.

This post walks through a recent working paper by Susan Athey, Guido Imbens, Zhaonan Qu, and Davide Viviano: “Triply Robust Panel Estimators.” As you can tell by the title, we’re talking some serious robustness.

The paper introduces TROP, or the **Triply RObust Panel** estimator, which combines unit weights, time weights, and a low-rank outcome model, with the tuning parameters chosen by leave-one-out cross-validation on untreated cells. In the paper’s main semi-synthetic simulations, the authors mostly set treatment effects to zero and compare how well each estimator recovers the missing untreated outcome; on that metric TROP is the best performer in 20 of 21 benchmark designs.

To make this concrete, I am going to use a toy spreadsheet. This is a didactic spreadsheet, not a production estimator. It is meant to show the anatomy of the problem. The sheet has three observed covariates because real panels do, but the baseline TROP estimator in the paper does not require covariates; the paper adds covariates later as an extension.

Set up

The workbook has a `panel_data` sheet with 8 units, A through H, over 100 periods. Unit A is treated from period 51 onward. Unit B is treated from period 67 onward. Units C through H are never treated. Treatment is binary and absorbing.

The untreated outcome is generated by

$$Y_{it}(0) = \alpha_i + \beta_t + X_{it}'\delta + \gamma_i F_t + \varepsilon_{it}$$

So there are five moving parts:

1. a unit fixed effect,

2. a time fixed effect,

3. three observed covariates,

4. an unobserved common factor,

5. a unit-specific loading that tells us how strongly each unit responds to that factor.

That last term (before the error term) is the interactive fixed effect. It is the troublemaker.

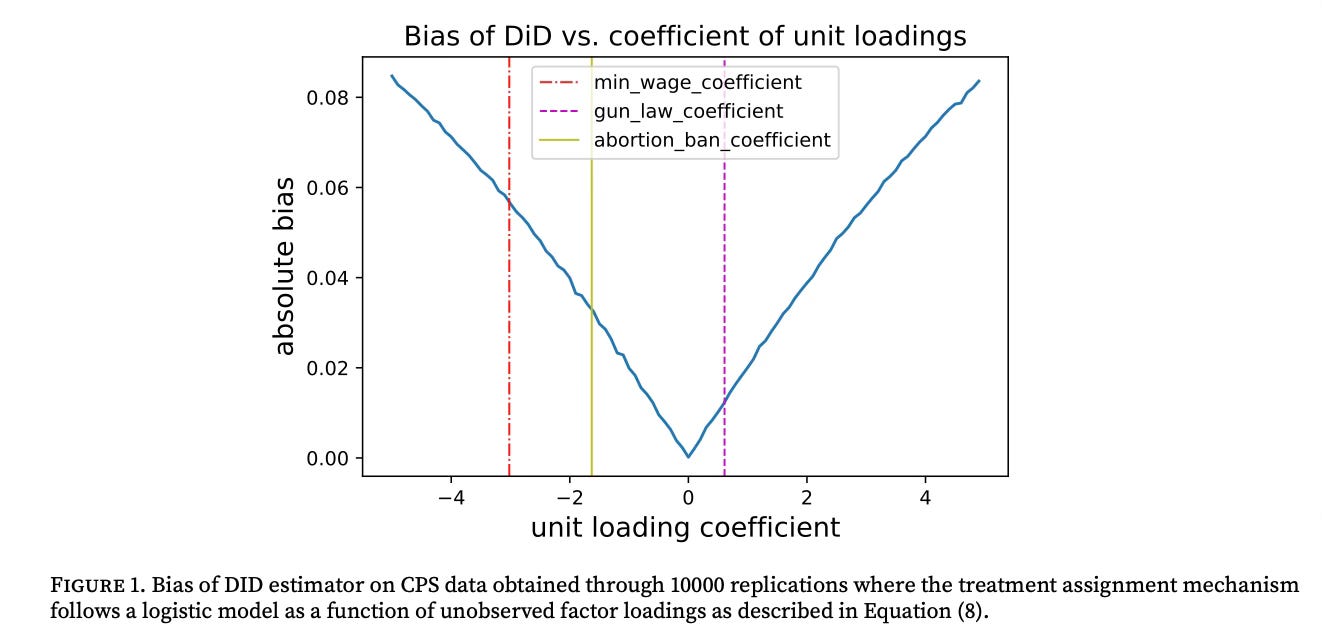

I made units A and B carry large factor loadings (2.0 and 1.7) while the donor units C through H range from 0.4 down to -0.5. That means the treated units have untreated trends that are hard to match with simple averages and hard to reproduce with convex combinations of donor units. In other words, the sheet is designed to frustrate plain DiD and basic synthetic control for reasons that are structural rather than cute.

I also left the hidden machinery visible. Columns K–M show the latent factor F_t, the unit loading gamma_i, and their interaction. Column N is the true untreated outcome. Column O is the treatment effect. Column P is the observed outcome. An actual estimator does not get to see columns K–O. You do. That is the pedagogical trick.
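If you would rather poke at the same anatomy in code, here is a minimal Python sketch of a panel shaped like the sheet. Only the unit labels, the treatment dates, and the loadings for A and B come from the description above. The donor loadings, the fixed effects, the covariate coefficients, the AR(1) factor, the noise scales, and the constant effect of 2.5 are all assumptions of mine, so this will not reproduce the workbook's numbers.

```python
import random

def simulate_panel(n_periods=100, seed=0):
    """Return one dict per unit-period, with visible and hidden columns."""
    rng = random.Random(seed)
    units = list("ABCDEFGH")
    # Loadings for A and B are from the post; the donor values are assumed,
    # spread between 0.4 and -0.5.
    gamma = dict(zip(units, [2.0, 1.7, 0.4, 0.2, 0.1, -0.1, -0.3, -0.5]))
    alpha = {u: rng.gauss(0.0, 1.0) for u in units}  # unit fixed effects (assumed)
    delta = [0.5, -0.3, 0.2]                         # covariate coefficients (assumed)
    first_treated = {"A": 51, "B": 67}               # treatment dates from the post
    rows, factor = [], 0.0
    for t in range(1, n_periods + 1):
        factor = 0.9 * factor + rng.gauss(0.0, 0.5)  # assumed AR(1) common factor
        beta_t = 0.02 * t                            # assumed time fixed effect
        for u in units:
            x = [rng.gauss(0.0, 1.0) for _ in range(3)]
            y0 = (alpha[u] + beta_t
                  + sum(d * xi for d, xi in zip(delta, x))
                  + gamma[u] * factor + rng.gauss(0.0, 0.3))
            treated = int(u in first_treated and t >= first_treated[u])
            tau = 2.5 * treated                      # assumed constant effect
            rows.append({"unit": u, "period": t, "treated": treated,
                         "F_t": factor, "gamma_i": gamma[u],
                         "y0": y0, "tau": tau, "y": y0 + tau})
    return rows

panel = simulate_panel()
n_treated = sum(r["treated"] for r in panel)
print(len(panel), n_treated)  # 800 rows, 84 treated cells (50 for A, 34 for B)
```

The keys `y0`, `tau`, and `y` play the roles of columns N, O, and P. An estimator should only ever be handed `unit`, `period`, `treated`, and `y`.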

The usual estimators

All of the familiar panel estimators differ mainly in how they use information across units and across time to fill in the missing untreated outcome.

Difference-in-differences is the blunt instrument. In its plain version, it effectively gives equal weight to all control units and equal weight to all pre-treatment periods. No flexible outcome model. Just means stacked on means. If untreated trends really are parallel, fine. If they are not, the estimate absorbs trend differences and calls them treatment.

Synthetic control is more selective. It chooses unit weights to make the pre-treatment path of a treated unit look like a weighted average of untreated units. It is much smarter about units than plain DiD. But time is still handled fairly rigidly, and the method works only if the treated unit can actually be approximated by a convex combination of donors.

Synthetic difference-in-differences adds time weights to unit weights. That is an important improvement. It says not every donor unit is equally informative and not every pre-treatment period is equally informative. Better. Still, in the baseline comparison here, there is no flexible low-rank outcome model doing the heavy lifting.

Matrix completion goes the other way. It leans into outcome modeling by assuming the untreated outcome matrix is approximately low-rank. That is a factor-model move.

TROP does not force you to choose one or two. It uses unit weights, time weights, and an outcome model together.

That last sentence is the paper’s real contribution. TROP is not just one more estimator. It is a framework that contains several familiar estimators as special cases.

Factor structure

The clean way to say the problem for the usual estimators is: untreated outcomes often depend on interactive fixed effects.

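Instead of writing

$$Y_{it}(0) = \alpha_i + \beta_t + \varepsilon_{it},$$

write

$$Y_{it}(0) = \alpha_i + \beta_t + L_{it} + \varepsilon_{it}, \qquad L_{it} = \Gamma_i'\Lambda_t.$$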

The term L_{it} is the latent factor structure. Lambda_t is a vector of common shocks or factors. Gamma_i is a vector of unit-specific loadings. Same shock, different exposure. Same wave, different surfboard.

In fact, the beach analogy is useful. Imagine a lineup of surfers. The waves differ over time. The boards differ across surfers. If one surfer catches bigger, faster rides, is that because the surfer is better or because that surfer happened to be sitting where the waves were breaking hardest and had a board built for them? The waves are the factors. The boards are the loadings.

DiD effectively assumes everybody faces the same waves in the same way. Synthetic control says maybe I can reconstruct your position in the lineup by mixing together the other surfers. Matrix completion says let’s estimate the wave structure directly. TROP says: I am not betting the farm on one of those stories if I can use all three.

In the toy sheet, the factor structure is visible in columns K–M, and it explains exactly why simple designs go sideways. A and B simply load much more heavily on the common factor than the donors do. That breaks parallel trends. It also puts the treated units close to or outside the donor span, which is bad news for plain synthetic control.

Just trop

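For a treated cell (i,t), meaning unit i in period t, TROP fits untreated outcomes by solving a weighted low-rank problem of the form

$$(\hat\alpha, \hat\beta, \hat L) = \arg\min_{\alpha,\beta,L} \sum_{j}\sum_{s} \theta_s^{i,t}\,\omega_j^{i,t}\,(1-W_{js})\,\big(Y_{js}-\alpha_j-\beta_s-L_{js}\big)^2 + \lambda_{nn}\,\|L\|_*$$

In this expression W_{js} = 1 marks a treated cell, so only untreated cells enter the fit, and \|L\|_* is the nuclear norm of L.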

Here:

- omega^{i,t}_j are unit weights,

- theta^{i,t}_s are time weights,

- L is the low-rank outcome component,

- lambda_{nn} controls how aggressively we shrink toward a low-rank structure.

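The paper’s practical specification uses exponential weights,

$$\theta_s^{i,t} = \exp\big(-\lambda_{time}\,|t-s|\big), \qquad \omega_j^{i,t} = \exp\big(-\lambda_{unit}\,\mathrm{dist}^{unit}_{-t}(j,i)\big),$$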

where the unit distance is built from untreated outcome differences over periods when both units are untreated. So nearby periods get more weight, and units that look more like the treated unit get more weight.
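To make the weighting concrete, here is a small Python sketch of exponential time and unit weights for one target cell. It assumes the time distance is the absolute gap in periods and the unit distance is the root mean squared outcome gap over periods, other than the target period, in which both units are untreated. The function names and the tiny panel are hypothetical, and periods are 0-indexed.

```python
import math

def time_weights(t, n_periods, lam_time):
    """Weight on period s decays with its distance from the target period t."""
    return [math.exp(-lam_time * abs(t - s)) for s in range(n_periods)]

def unit_distance(Y, W, i, j, t):
    """Root mean squared gap between units i and j over periods (other than t)
    in which both are untreated. Returns inf if there is no such period."""
    both = [s for s in range(len(Y[i]))
            if s != t and W[i][s] == 0 and W[j][s] == 0]
    if not both:
        return float("inf")
    return math.sqrt(sum((Y[i][s] - Y[j][s]) ** 2 for s in both) / len(both))

def unit_weights(Y, W, i, t, lam_unit):
    """Weight on unit j decays with its distance from the target unit i."""
    out = []
    for j in range(len(Y)):
        d = unit_distance(Y, W, i, j, t)
        out.append(0.0 if math.isinf(d) else math.exp(-lam_unit * d))
    return out

# Hypothetical 3-unit, 4-period panel; unit 0 is treated in the last period.
Y = [[1.0, 2.0, 3.0, 9.0],
     [1.0, 2.0, 3.0, 4.0],
     [3.0, 4.0, 5.0, 6.0]]
W = [[0, 0, 0, 1],
     [0, 0, 0, 0],
     [0, 0, 0, 0]]
theta = time_weights(t=3, n_periods=4, lam_time=0.5)
omega = unit_weights(Y, W, i=0, t=3, lam_unit=0.5)
print(theta, omega)
```

Unit 1 tracks unit 0 exactly before treatment, so it keeps full weight, while unit 2 sits two outcome units away and is discounted. Setting either lambda to zero gives uniform weights on that margin.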

The tuning parameters (lambda_{time}, lambda_{unit}, lambda_{nn}) are then chosen by leave-one-out cross-validation: temporarily treat untreated cells as if they were treated, predict them without using their own information, square the resulting placebo treatment effects, and pick the tuning parameters that minimize that out-of-sample criterion.

Cross-validation makes the weights data-driven.
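The placebo logic can be sketched in a few lines of Python. To keep it short, this version drops the low-rank term entirely (the lambda_{nn} = infinity case), so the predictor is a weighted two-way fixed effects fit solved by simple backfitting. That simplification, the function names, and the tiny noise-free panel are my assumptions, not the paper's implementation.

```python
import math

def fit_predict(Y, W, i, t, lam_unit, lam_time, n_iter=100):
    """Predict Y[i][t](0) from a weighted two-way fixed effects fit that
    never uses cell (i, t) itself. No low-rank term in this sketch."""
    N, T = len(Y), len(Y[0])
    # A cell is usable if it is untreated and is not the held-out cell.
    use = [[W[j][s] == 0 and (j, s) != (i, t) for s in range(T)] for j in range(N)]
    theta = [math.exp(-lam_time * abs(t - s)) for s in range(T)]
    omega = []
    for j in range(N):
        both = [s for s in range(T) if s != t and W[i][s] == 0 and W[j][s] == 0]
        if both:
            d = math.sqrt(sum((Y[i][s] - Y[j][s]) ** 2 for s in both) / len(both))
            omega.append(math.exp(-lam_unit * d))
        else:
            omega.append(0.0)
    alpha, beta = [0.0] * N, [0.0] * T
    for _ in range(n_iter):  # backfitting: alternate unit and time updates
        for j in range(N):
            den = sum(theta[s] for s in range(T) if use[j][s])
            if den > 0:
                alpha[j] = sum(theta[s] * (Y[j][s] - beta[s])
                               for s in range(T) if use[j][s]) / den
        for s in range(T):
            den = sum(omega[j] for j in range(N) if use[j][s])
            if den > 0:
                beta[s] = sum(omega[j] * (Y[j][s] - alpha[j])
                              for j in range(N) if use[j][s]) / den
    return alpha[i] + beta[t]

def loocv_score(Y, W, lam_unit, lam_time):
    """Sum of squared placebo effects over all untreated cells."""
    return sum((Y[i][t] - fit_predict(Y, W, i, t, lam_unit, lam_time)) ** 2
               for i in range(len(Y)) for t in range(len(Y[0])) if W[i][t] == 0)

def choose_lambdas(Y, W, grid_unit, grid_time):
    """Grid point with the smallest leave-one-out criterion."""
    return min(((lu, lt) for lu in grid_unit for lt in grid_time),
               key=lambda p: loocv_score(Y, W, *p))

# Hypothetical noise-free panel with additive unit and time effects only.
a = [0.0, 1.0, 2.0, 3.0]
b = [0.5 * s for s in range(8)]
Y = [[a[j] + b[s] for s in range(8)] for j in range(4)]
W = [[1 if (j == 0 and s >= 5) else 0 for s in range(8)] for j in range(4)]
score = loocv_score(Y, W, lam_unit=0.5, lam_time=0.2)
best = choose_lambdas(Y, W, [0.0, 0.5], [0.0, 0.2])
print(score, best)
```

Because this toy panel is purely additive, every placebo cell is predicted almost exactly and the criterion is essentially zero for any lambdas. On data with a factor structure the criterion would separate the grid points, which is the whole reason to run it.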

Once the model is fit, the estimated treatment effect for a treated cell is the observed outcome minus the estimated untreated outcome. With multiple treated units and periods, the paper estimates a cell-specific effect for each treated unit-time pair and then averages those effects over treated cells. That is how TROP handles staggered or more general assignment patterns without pretending the world contains only one treated unit in one post-period.

A neat feature is that familiar estimators fall out as limiting cases. If all weights are uniform and lambda_{nn}=\infty, you are back in the TWFE/DiD world. If all weights are uniform but lambda_{nn}<\infty, you are doing matrix completion. If lambda_{nn}=\infty but the weights are learned, you land in SC/SDID territory.

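Doubly robust isn’t enough; bring in triply robust. The theoretical claim in the paper is that any bias is bounded by a product of three terms, schematically

$$|\text{bias}| \le (\text{unit imbalance}) \times (\text{time imbalance}) \times (\text{outcome-model misspecification}).$$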

So if any one of those channels is essentially zero, the whole bias term collapses. If the unit weights get you excellent balance, you can survive some misspecification in the time weights and the outcome model. If the outcome model is right, you can survive imperfect balancing. That is the sense in which the estimator is triply robust.

Not bulletproof. But we’re getting close.

To the spreadsheet!

The spreadsheet is good for three things: seeing the pathology, computing simple estimators, and measuring bias because in a toy DGP we actually know the truth.

Because the sheet contains both the true untreated outcome and the treatment effect, you can compute the true ATT directly.

In Excel, one direct formula is

`=AVERAGEIFS(panel_data!$O:$O,panel_data!$E:$E,1)`

because column O is treatment_effect_tau and column E is treated.

An equivalent formula is

`=AVERAGEIFS(panel_data!$P:$P,panel_data!$E:$E,1)-AVERAGEIFS(panel_data!$N:$N,panel_data!$E:$E,1)`

because column P is the observed outcome and column N is the true untreated outcome.

In this toy panel, the true ATT is **2.5976**.
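The same two calculations are easy to mirror in Python if you export the sheet. This is a small sketch on three made-up rows. The keys `treated`, `tau`, `y`, and `y0` are hypothetical stand-ins for columns E, O, P, and N.

```python
def true_att(rows):
    """Average treatment effect on treated cells, computed two equivalent ways."""
    treated = [r for r in rows if r["treated"] == 1]
    # Mirrors AVERAGEIFS on the treatment-effect column.
    direct = sum(r["tau"] for r in treated) / len(treated)
    # Mirrors the difference of two AVERAGEIFS calls: observed minus untreated.
    by_difference = (sum(r["y"] for r in treated) / len(treated)
                     - sum(r["y0"] for r in treated) / len(treated))
    return direct, by_difference

# Made-up rows for illustration only.
rows = [
    {"treated": 1, "y0": 1.0, "tau": 2.0, "y": 3.0},
    {"treated": 1, "y0": 2.0, "tau": 3.0, "y": 5.0},
    {"treated": 0, "y0": 4.0, "tau": 0.0, "y": 4.0},
]
direct, by_difference = true_att(rows)
print(direct, by_difference)  # 2.5 2.5
```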

For a spreadsheet demonstration, the cleanest version is to treat A and B as two cohorts and use only the never-treated units C–H as donors. That is a simplification for transparency. You can make the donor pool fancier later.

For unit A, define the pre-period as 1–50 and the post-period as 51–100. For unit B, define the pre-period as 1–66 and the post-period as 67–100.

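Then compute

$$\hat\tau_A = \big(\bar Y_{A,\text{post}} - \bar Y_{A,\text{pre}}\big) - \big(\bar Y_{\text{donors},\text{post}} - \bar Y_{\text{donors},\text{pre}}\big)$$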

and the same for B.

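In Excel this is just `AVERAGEIFS` work. Then take the overall ATT as the post-period-count-weighted average of the A and B estimates, with 50 post-periods for A and 34 for B:

$$\widehat{ATT} = \frac{50\,\hat\tau_A + 34\,\hat\tau_B}{84}$$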

On this toy panel, that simple DiD comes out around 5.90, far above the true 2.60. That is the interactive fixed effect showing up.
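Here is a minimal Python sketch of that cohort-by-cohort DiD and the count-weighted pooling. The ten-period, noise-free panel is made up for illustration (it is not the workbook), and the function names are hypothetical. The treated unit loads on the factor at 2.0, the donors at 0.4 and 0.0, and the true effect is 1.0.

```python
def mean(xs):
    xs = list(xs)
    return sum(xs) / len(xs)

def did_cohort(y, treated_unit, donors, first_post):
    """Plain DiD for one cohort. y[u] is a list of outcomes; periods are
    1-indexed in the text, so period p sits at index p - 1."""
    T = len(y[treated_unit])
    pre, post = range(first_post - 1), range(first_post - 1, T)
    treated_change = (mean(y[treated_unit][s] for s in post)
                      - mean(y[treated_unit][s] for s in pre))
    donor_change = (mean(y[d][s] for d in donors for s in post)
                    - mean(y[d][s] for d in donors for s in pre))
    return treated_change - donor_change, len(post)

def pooled_att(cohort_results):
    """Post-period-count-weighted average of (estimate, n_post) pairs."""
    total = sum(n for _, n in cohort_results)
    return sum(est * n for est, n in cohort_results) / total

# Noise-free illustration (all numbers assumed): outcome = loading * F_t + effect.
F = [float(t) for t in range(1, 11)]
y = {"A": [2.0 * f + (1.0 if s >= 5 else 0.0) for s, f in enumerate(F)],
     "C": [0.4 * f for f in F],
     "D": [0.0 * f for f in F]}
est_A, n_post_A = did_cohort(y, "A", ["C", "D"], first_post=6)
print(est_A, n_post_A)
```

In this made-up panel the DiD estimate comes out at about 10 against a true effect of 1. The extra 9 is the loading gap (2.0 versus a donor average of 0.2) times the rise in the factor between the pre and post windows, which is exactly the kind of bias the interactive fixed effect produces.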

For synthetic control, do the same thing cohort by cohort.

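For unit A, create six donor weights for C–H. Constrain them to be nonnegative and to sum to one. Use Solver to minimize the pre-treatment fit criterion

$$\sum_{t=1}^{50}\Big(Y_{A,t} - \sum_{j \in \text{donors}} w_j\,Y_{j,t}\Big)^2$$

Then use those weights to predict the post-treatment counterfactual:

$$\hat Y_{A,t}(0) = \sum_{j \in \text{donors}} w_j\,Y_{j,t}, \qquad t = 51,\dots,100.$$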

Compute the average post-treatment gap for A. Then repeat the same exercise for B using periods 1–66 as the pre-treatment window and 67–100 as the post-treatment window.

The useful spreadsheet formula for the objective is a weighted pre-fit SSE. If the treated pre-treatment path is in one column and the donor matrix is in a contiguous block, Solver can minimize a `SUMXMY2` expression built on the treated series and the donor-weighted synthetic series.

On this toy panel, a bare-bones SC of that sort will overshoot badly since A and B have much larger factor loadings than the donor pool, so the synthetic can fit only by doing its best impression of a unit it fundamentally is not.
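For readers who prefer code to Solver, here is a minimal Python sketch of the same constrained least-squares problem, solved by projected gradient descent onto the simplex. The solver choice, the function names, and the noise-free ten-period panel are my assumptions. Any constrained optimizer would do the same job.

```python
def project_simplex(v):
    """Euclidean projection onto {w : w >= 0, sum(w) = 1}."""
    u = sorted(v, reverse=True)
    css, theta = 0.0, 0.0
    for k, uk in enumerate(u, start=1):
        css += uk
        shift = (css - 1.0) / k
        if uk - shift > 0:
            theta = shift  # keep the shift from the last index that qualifies
    return [max(x - theta, 0.0) for x in v]

def sc_weights(y_pre, donors_pre, n_iter=2000):
    """Minimize the pre-period squared gap between the treated series and
    the donor-weighted series, with nonnegative weights that sum to one."""
    J, T = len(donors_pre), len(y_pre)
    w = [1.0 / J] * J
    # Conservative step: the squared Frobenius norm bounds the curvature.
    step = 1.0 / (2.0 * sum(x * x for row in donors_pre for x in row))
    for _ in range(n_iter):
        resid = [y_pre[s] - sum(w[j] * donors_pre[j][s] for j in range(J))
                 for s in range(T)]
        grad = [-2.0 * sum(resid[s] * donors_pre[j][s] for s in range(T))
                for j in range(J)]
        w = project_simplex([w[j] - step * grad[j] for j in range(J)])
    return w

# Noise-free illustration (all numbers assumed): outcome = loading * F_t + effect.
F = [float(t) for t in range(1, 11)]
donors = [[g * f for f in F] for g in (0.4, 0.1, -0.5)]
treated = [2.0 * f + (1.0 if s >= 5 else 0.0) for s, f in enumerate(F)]
w = sc_weights(treated[:5], [d[:5] for d in donors])
synthetic = [sum(w[j] * donors[j][s] for j in range(3)) for s in range(10)]
post_gap = sum(treated[s] - synthetic[s] for s in range(5, 10)) / 5
print([round(x, 3) for x in w], round(post_gap, 2))
```

With a treated loading of 2.0 and donor loadings no higher than 0.4, all the weight piles onto the highest-loading donor and the average post-period gap lands far above the true effect of 1.0. The treated unit is outside the donor span, so the convexity constraint binds.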

I’m going to pass over how to do this in Excel for now since it is a bit of a process and will double the length of this post. So now to TROP.

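For a treated cell (i,t), compute distances between the treated unit and each donor unit using untreated overlapping periods. A simple helper formula is the root mean squared gap over the relevant untreated periods. Then enter trial values of lambda_unit and lambda_time in two input cells and calculate, for each donor unit j and each period s,

$$\omega_j = \exp\big(-\lambda_{unit}\cdot \mathrm{dist}_j\big), \qquad \theta_s = \exp\big(-\lambda_{time}\cdot |t-s|\big).$$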

Normalize them if you want the weights to sum to one for display and comparison.

Then do the paper’s placebo trick in spreadsheet form: temporarily hide an untreated cell, pretend it was treated, predict it using your chosen weights, and square the implied placebo treatment effect. Sum those squared placebo errors over all the held-out cells, repeat for each point on a grid of lambda values, and keep the lambdas with the smallest sum. That gives you a spreadsheet version of the leave-one-out cross-validation criterion.

Where Excel starts to mutiny is the low-rank outcome-model step. The paper’s exact estimator fits a weighted low-rank component using a nuclear-norm penalty. That is a coding exercise, not an Excel one. You can mimic the idea with one or two principal components or with a hand-built factor proxy, but once you want the actual TROP estimator, open R or Python and spare yourself the damage.
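As a taste of what the factor-proxy route looks like outside Excel, here is a Python sketch that pulls one principal component out of the donor series by power iteration and then regresses the treated unit's pre-period on it. This is a rank-one stand-in, not the nuclear-norm estimator, and it assumes a single factor with no separate time effect. The function names and the noise-free data are hypothetical.

```python
import math

def leading_factor(R, n_iter=200):
    """Power iteration for the leading right singular vector of R
    (units x periods). Assumes R is not all zeros."""
    N, T = len(R), len(R[0])
    # Start from the largest row so the start vector lies in the row space.
    v = list(max(R, key=lambda row: sum(x * x for x in row)))
    for _ in range(n_iter):
        u = [sum(R[j][s] * v[s] for s in range(T)) for j in range(N)]  # R v
        v = [sum(R[j][s] * u[j] for j in range(N)) for s in range(T)]  # R' u
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
    return v

def factor_counterfactual(y_treated, donors, t0):
    """Estimate one factor from demeaned donor series, then fit the treated
    unit's pre-period (indices below t0) on [1, factor] by OLS."""
    T = len(y_treated)
    R = [[x - sum(row) / T for x in row] for row in donors]  # remove unit means
    f = leading_factor(R)
    fbar = sum(f[:t0]) / t0
    ybar = sum(y_treated[:t0]) / t0
    slope = (sum((f[s] - fbar) * (y_treated[s] - ybar) for s in range(t0))
             / sum((f[s] - fbar) ** 2 for s in range(t0)))
    return [ybar + slope * (f[s] - fbar) for s in range(T)]

# Noise-free illustration (all numbers assumed): outcome = intercept + loading * F_t.
F = [0.0, 1.0, 4.0, 9.0, 16.0, 25.0, 36.0, 49.0]
donors = [[1.0 + 0.4 * f for f in F],
          [2.0 - 0.5 * f for f in F],
          [0.1 * f for f in F]]
treated = [3.0 + 2.0 * f + (1.5 if s >= 5 else 0.0) for s, f in enumerate(F)]
cf = factor_counterfactual(treated, donors, t0=5)
effect = sum(treated[s] - cf[s] for s in range(5, 8)) / 3
print(round(effect, 6))
```

In this made-up panel the treated loading of 2.0 is far outside the donor range, yet the factor regression recovers the effect of 1.5, because it extrapolates along the factor instead of averaging donors. With noise, several factors, or time effects, a rank-one proxy like this will be much rougher.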

What the spreadsheet can do is show you what that outcome model is chasing. Compare the treated units’ observed outcomes in column P to the untreated outcomes in N and then inspect columns K–M. The whole point of the low-rank component is to recover the common factor structure hiding inside that gap.

The easiest bias calculation in the sheet is simply

$$\text{bias} = \widehat{ATT} - ATT_{\text{true}}$$

Because we know the data-generating process, we can do that directly.

But the paper’s own simulation logic is even cleaner: set the treatment effect to zero and ask which estimator best predicts the untreated outcome. You can mimic that by duplicating panel_data, replacing the observed outcome in column P with the untreated outcome from column N, and then rerunning the estimators. In that placebo sheet, the true ATT is zero by construction. The estimator’s average treatment effect is pure bias, and the RMSE of the estimated counterfactual against column N is the metric Athey et al. focus on most heavily.
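The scoring step for that placebo sheet is short enough to sketch in Python. The function name and the two made-up prediction vectors are hypothetical. The point is only that average placebo effect and RMSE can rank estimators differently.

```python
import math

def placebo_report(y0_true, y0_hat):
    """Score one estimator on a placebo sheet. Inputs are lists over the
    pseudo-treated cells, where the true effect is zero by construction."""
    errors = [truth - hat for truth, hat in zip(y0_true, y0_hat)]
    bias = sum(errors) / len(errors)  # average placebo "effect"
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return bias, rmse

# Made-up numbers for illustration only.
y0_true = [1.0, 2.0, 3.0, 4.0]
predictions = {
    "shifted": [0.0, 1.0, 2.0, 3.0],  # always one unit too low
    "noisy": [1.5, 1.5, 3.5, 3.5],    # errors cancel on average
}
report = {name: placebo_report(y0_true, hat) for name, hat in predictions.items()}
print(report)
```

Here the "shifted" predictions have bias 1 and RMSE 1, while the "noisy" predictions have bias 0 and RMSE 0.5. An estimator can look unbiased on average and still miss individual cells, which is why the cell-level RMSE is the more demanding yardstick.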

That is the closest spreadsheet analogue to the paper’s main tables.

A last word

The point here is that the usual estimators make a narrower bet than TROP.

DiD bets on parallel trends. Synthetic control bets that the treated unit lies inside the donor span. SDID bets that balancing across both units and time is enough. Matrix completion bets that the low-rank outcome model is enough.

TROP hedges. It uses unit weighting, time weighting, and outcome modeling together, then lets placebo prediction error tell you how much to trust each margin.

That is what makes the estimator interesting. Not that it is complicated. Plenty of things are complicated. The interesting part is that it is complicated in the right place: the counterfactual. And that’s what we care about.